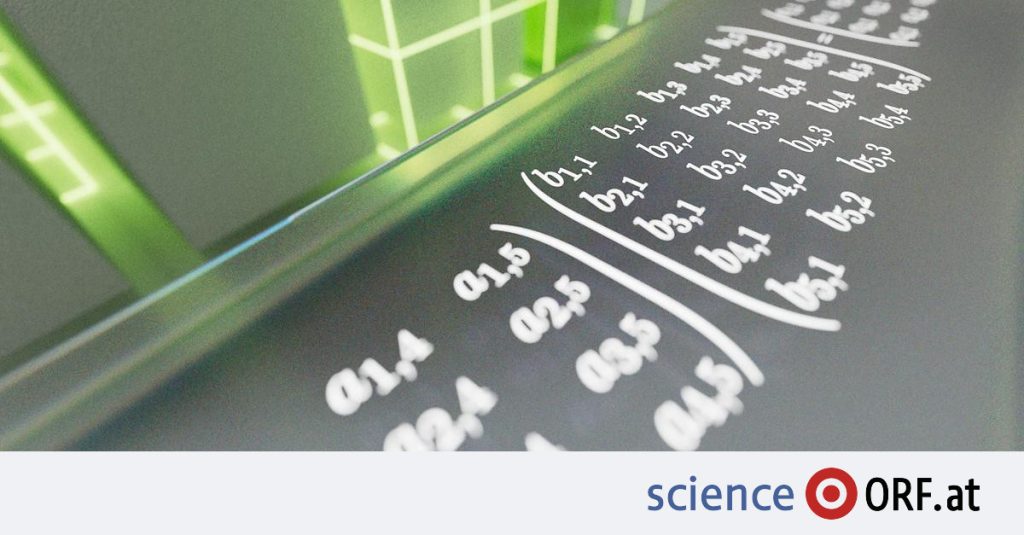

Image processing on mobile phones, graphics in computer games, speech recognition and even weather simulations all depend on one particular mathematical operation: matrix multiplication. How complicated this task is depends on the size of the matrices being multiplied.

To obtain the correct result quickly when multiplying matrices, you need the most efficient calculation methods and algorithms. For centuries, little progress was made in this field, until the German mathematician Volker Strassen showed in 1969 that matrix multiplication can be done in fewer steps than previously thought: two 2×2 matrices, for example, can be multiplied with seven multiplications instead of eight. The method he devised is known as the Strassen algorithm.
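To make Strassen's idea concrete, here is a minimal Python sketch (an illustration, not code from the study or from DeepMind) that multiplies square matrices whose size is a power of two using his seven block products, and counts the scalar multiplications along the way. The helper names `add`, `sub`, `split`, `join`, `naive` and `strassen` are hypothetical.

```python
from typing import List, Tuple

Matrix = List[List[int]]


def add(X: Matrix, Y: Matrix) -> Matrix:
    """Entry-wise sum of two equally sized matrices."""
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def sub(X: Matrix, Y: Matrix) -> Matrix:
    """Entry-wise difference X - Y."""
    return [[x - y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def split(M: Matrix) -> Tuple[Matrix, Matrix, Matrix, Matrix]:
    """Split a 2h x 2h matrix into its four h x h blocks (M11, M12, M21, M22)."""
    h = len(M) // 2
    return ([r[:h] for r in M[:h]], [r[h:] for r in M[:h]],
            [r[:h] for r in M[h:]], [r[h:] for r in M[h:]])


def join(C11: Matrix, C12: Matrix, C21: Matrix, C22: Matrix) -> Matrix:
    """Reassemble four blocks into one matrix."""
    return ([a + b for a, b in zip(C11, C12)] +
            [a + b for a, b in zip(C21, C22)])


def naive(A: Matrix, B: Matrix) -> Matrix:
    """Schoolbook product, using n**3 scalar multiplications."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def strassen(A: Matrix, B: Matrix) -> Tuple[Matrix, int]:
    """Strassen product of n x n matrices with n a power of two.

    Returns the product and the number of scalar multiplications performed.
    """
    if len(A) == 1:
        return [[A[0][0] * B[0][0]]], 1
    A11, A12, A21, A22 = split(A)
    B11, B12, B21, B22 = split(B)
    # Seven block products instead of the eight the schoolbook method needs.
    results = [
        strassen(add(A11, A22), add(B11, B22)),  # M1
        strassen(add(A21, A22), B11),            # M2
        strassen(A11, sub(B12, B22)),            # M3
        strassen(A22, sub(B21, B11)),            # M4
        strassen(add(A11, A12), B22),            # M5
        strassen(sub(A21, A11), add(B11, B12)),  # M6
        strassen(sub(A12, A22), add(B21, B22)),  # M7
    ]
    M1, M2, M3, M4, M5, M6, M7 = (m for m, _ in results)
    count = sum(c for _, c in results)
    # Combine the products using only additions and subtractions.
    C11 = add(sub(add(M1, M4), M5), M7)
    C12 = add(M3, M5)
    C21 = add(M2, M4)
    C22 = add(add(sub(M1, M2), M3), M6)
    return join(C11, C12, C21, C22), count


A = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]
B = [[1, 0, 2, 0], [0, 1, 0, 2], [3, 0, 1, 0], [0, 3, 0, 1]]
C, mults = strassen(A, B)
assert C == naive(A, B)
print(mults)  # 49 (the schoolbook method uses 64)
```

For 4×4 matrices the recursion uses 7 × 7 = 49 multiplications instead of 64. The price is extra additions, which is why practical implementations typically stop recursing below some cutoff size and fall back to the standard method.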

The system learns efficiency

More than 50 years later, AlphaTensor, a self-learning AI system from the British company DeepMind, has now found more efficient algorithms for multiplying relatively small matrices, which are the sizes that matter most in practice.

The AI achieved this through deep reinforcement learning, in a kind of game that, according to the experts at DeepMind, resembles chess, with many possible moves and a three-dimensional playing field. With each move, or computational step, the system evaluates whether it has come closer to solving the task. In this way, it gradually learns to find the most efficient algorithms.
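The "game" can be sketched roughly as in the tensor-decomposition formulation described in DeepMind's study: the three-dimensional playing field is a tensor that encodes 2×2 matrix multiplication, each move subtracts one rank-one tensor u ⊗ v ⊗ w (which stands for one scalar multiplication), and the game is won when the tensor reaches zero. The function names, the row-major index convention and the hand-entered moves are assumptions for illustration; the moves are Strassen's seven products, not moves found by AlphaTensor, and no learning takes place here.

```python
from itertools import product
from typing import List, Sequence

Tensor = List[List[List[int]]]


def matmul_tensor(n: int) -> Tensor:
    """Tensor T with T[a][b][c] = 1 iff entry a of A times entry b of B
    contributes to entry c of C = A @ B (entries flattened row-major)."""
    s = n * n
    T = [[[0] * s for _ in range(s)] for _ in range(s)]
    for i, j, k in product(range(n), repeat=3):
        T[i * n + k][k * n + j][i * n + j] = 1
    return T


def play_move(T: Tensor, u: Sequence[int], v: Sequence[int], w: Sequence[int]) -> Tensor:
    """One move: subtract the rank-one tensor u (x) v (x) w from the state.
    Each move stands for one scalar multiplication (u . A) * (v . B)."""
    s = len(T)
    return [[[T[a][b][c] - u[a] * v[b] * w[c] for c in range(s)]
             for b in range(s)] for a in range(s)]


def is_solved(T: Tensor) -> bool:
    """The game is won once every entry of the state is zero."""
    return all(x == 0 for plane in T for row in plane for x in row)


# Strassen's seven products as (u, v, w) triples over the entries
# [x11, x12, x21, x22]: u combines entries of A, v entries of B,
# and w says with which sign the product enters each entry of C.
STRASSEN_MOVES = [
    ([1, 0, 0, 1], [1, 0, 0, 1], [1, 0, 0, 1]),    # M1
    ([0, 0, 1, 1], [1, 0, 0, 0], [0, 0, 1, -1]),   # M2
    ([1, 0, 0, 0], [0, 1, 0, -1], [0, 1, 0, 1]),   # M3
    ([0, 0, 0, 1], [-1, 0, 1, 0], [1, 0, 1, 0]),   # M4
    ([1, 1, 0, 0], [0, 0, 0, 1], [-1, 1, 0, 0]),   # M5
    ([-1, 0, 1, 0], [1, 1, 0, 0], [0, 0, 0, 1]),   # M6
    ([0, 1, 0, -1], [0, 0, 1, 1], [1, 0, 0, 0]),   # M7
]

state = matmul_tensor(2)  # a 4 x 4 x 4 "board" with 8 nonzero entries
for move in STRASSEN_MOVES:
    state = play_move(state, *move)
print(is_solved(state), len(STRASSEN_MOVES))  # True 7
```

Removing the eight nonzero entries one at a time (the schoolbook method) also wins, but takes eight moves. In this framing, finding a faster algorithm means finding a winning sequence with fewer moves than the best one known.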

Surpassing the current state of research

At the start, AlphaTensor was given no knowledge about matrix multiplication algorithms. All the algorithms it found are based on its own experience and on calculations it had made earlier. AlphaTensor quickly arrived at algorithms that match the current state of research, and soon it was able to surpass them.

The DeepMind researchers now present the system in a new study in the journal Nature. Matrix calculations that require a hundred multiplications with the traditional method had previously been reduced to 80 multiplications through great effort and human expertise. AlphaTensor managed with only 76.
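The arithmetic behind these figures is simple: the schoolbook method needs m·n·p scalar multiplications to multiply an m×n matrix by an n×p matrix. The small sketch below assumes shapes of 4×5 and 5×5, chosen only because they give exactly 100 multiplications (the article does not name the sizes), and computes the relative savings of 80 and 76 multiplications. The function names are hypothetical.

```python
def schoolbook_multiplications(m: int, n: int, p: int) -> int:
    """Scalar multiplications used by the standard method for an (m x n) @ (n x p) product."""
    return m * n * p


def savings(baseline: int, improved: int) -> float:
    """Percentage of multiplications saved relative to the baseline."""
    return 100 * (baseline - improved) / baseline


baseline = schoolbook_multiplications(4, 5, 5)  # assumed shapes, giving 100
for label, count in [("previous best", 80), ("AlphaTensor", 76)]:
    print(f"{label}: {count} multiplications, {savings(baseline, count):.0f}% fewer")
# previous best: 80 multiplications, 20% fewer
# AlphaTensor: 76 multiplications, 24% fewer
```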

Successes in chess and Go

DeepMind has already shown many times that amazing things can be achieved through reinforcement learning. About five years ago, AlphaZero taught itself to play chess in just a few hours and then easily beat the best chess program of the time.

DeepMind’s AI has also triumphed at Go in the past, even though the game had been a challenge for computers for decades. With matrix multiplication and AlphaTensor, the number of possibilities and possible “moves” is now more than 30 orders of magnitude higher (a factor of 10³⁰).

Greater efficiency

With AlphaTensor, the researchers want to explore how efficiently certain matrix multiplications can be carried out. According to them, the results obtained so far could indeed lead to “significantly greater efficiency and speed” in some computer programs and computational tasks.
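Why do a few saved multiplications matter? When a small scheme is applied recursively to blocks of a large matrix, the savings compound. The back-of-the-envelope sketch below (an illustration, not taken from the study) compares Strassen's 7-product 2×2 scheme with the 8-product standard one on 1024×1024 matrices; it deliberately ignores additions and memory traffic, which also affect real running times. The function name is hypothetical.

```python
import math


def recursive_multiplications(r: int, k: int) -> int:
    """Scalar multiplications when a 2x2 block scheme with r block products
    is applied recursively to n x n matrices, n = 2**k (additions ignored)."""
    return r ** k


k = 10  # n = 1024
schoolbook = recursive_multiplications(8, k)  # 8 products: same count as n**3
strassen = recursive_multiplications(7, k)
print(schoolbook, strassen)  # 1073741824 282475249
print(f"{schoolbook / strassen:.1f}x fewer multiplications")  # 3.8x fewer multiplications
# The count grows like n**log2(r): exponent 3 for the standard scheme, about 2.807 for Strassen.
print(f"growth exponent: {math.log2(8):.3f} vs {math.log2(7):.3f}")  # 3.000 vs 2.807
```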

According to the authors, AlphaTensor can also find algorithms that are optimized for specific hardware and therefore run 10 to 20 percent faster on it, for example on particular graphics cards.

Looking ahead, the authors hope that AlphaTensor will serve as a basis for finding the most efficient possible algorithms for other computational tasks and mathematical problems, including ones unrelated to matrices.
